Personal Project · October 2025 – Present

CCEM: Building the Environment Manager That Grew Up Alongside Claude Code

When Claude Code Landed, I Had No Way to Watch It Work

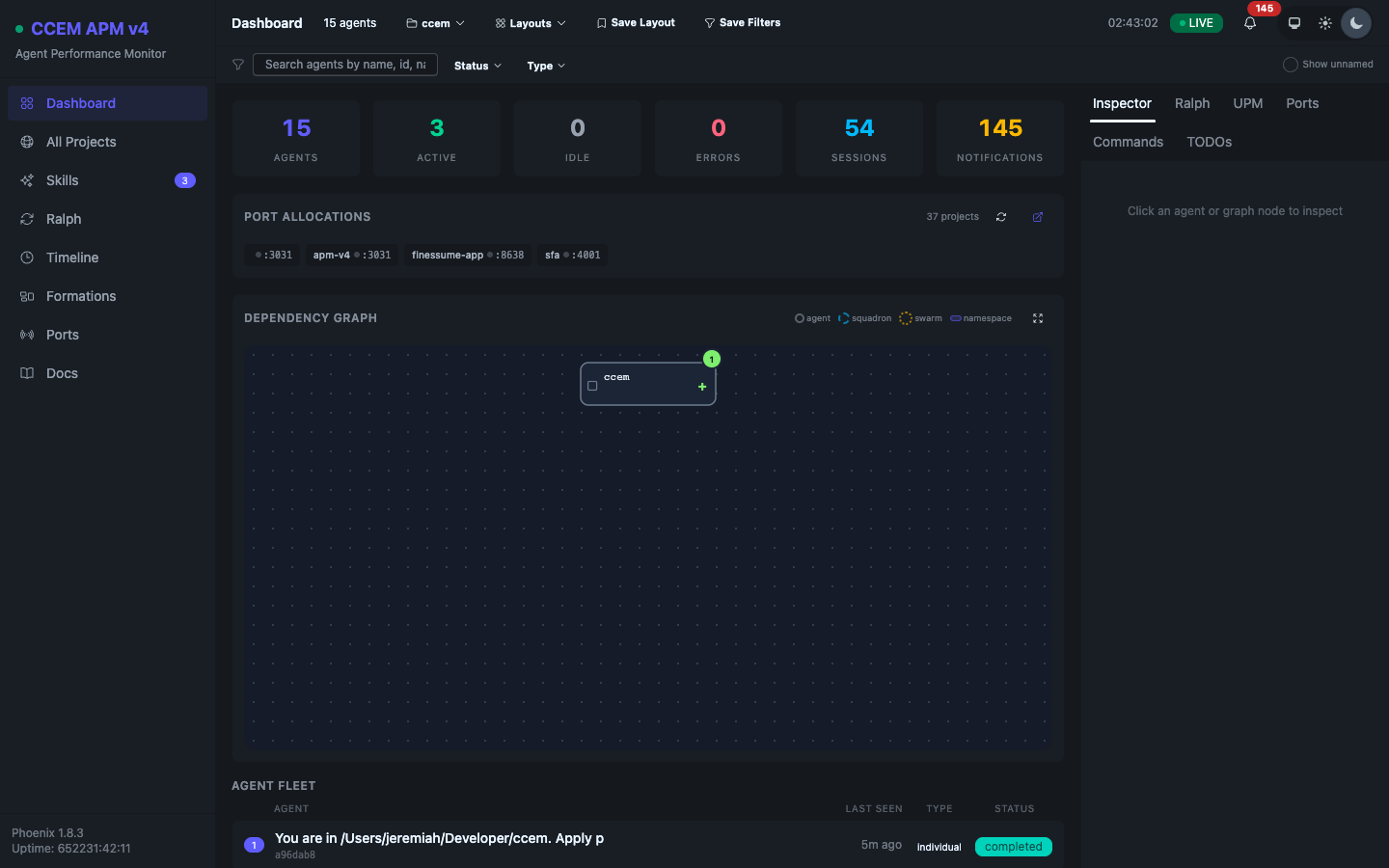

Anthropic released Claude Code, the agentic CLI, as a research preview in early 2025, with general availability following in May.[1] Within weeks I was running it across half a dozen active projects simultaneously: finessume, pegues.io, LGTM, VIKI, LFG. The experience was powerful and opaque in equal measure. Every session ran its own agent process, spent its own token budget, and modified its own files. There was no way to see what all of them were doing from a single vantage point.

The first thing I noticed was that sessions would collide — two instances reading and writing the same .claude/CLAUDE.md, each making decisions based on stale context the other had already changed. Config divergence was silent and cumulative. I needed tooling that didn’t exist yet. That gap became CCEM.

A TypeScript CLI to Stop Sessions from Colliding

Version 1.0.0 shipped on October 17, 2025 as @ccem/core: a focused TypeScript / Node.js CLI that solved the immediate problem of safely managing Claude Code config files across concurrent sessions. I built it TDD-strict from the first commit — every phase had a full test suite before any implementation code. Seven commands shipped at v1.0.0:

- ccem merge: five strategies (recommended, default, conservative, hybrid, custom) for reconciling divergent .claude/ configs

- ccem backup and ccem restore: compressed archives with SHA-256 checksums

- ccem audit: Zod[2]-based security scanning of .claude/ configs with severity filtering

- ccem fork-discover: conversation history analysis to identify divergence points between sessions

- ccem validate: schema validation against known Claude Code config formats

- ccem tui: an Ink[3] interactive terminal UI with 6 views

v1.0.0 released with 543 tests at 92.37% coverage. By December 2025, a React/Express web UI layer and Chrome DevTools MCP[4] integration brought the test count to 867 at 96.89% coverage.

Figure caption (start missing): ... .claude/ config directories across concurrent projects. Merge strategies reconcile divergent configs; fork-discover identifies where sessions diverged. Released with a 543-test Vitest suite.

A Proof of Concept That Proved Its Own Inadequacy

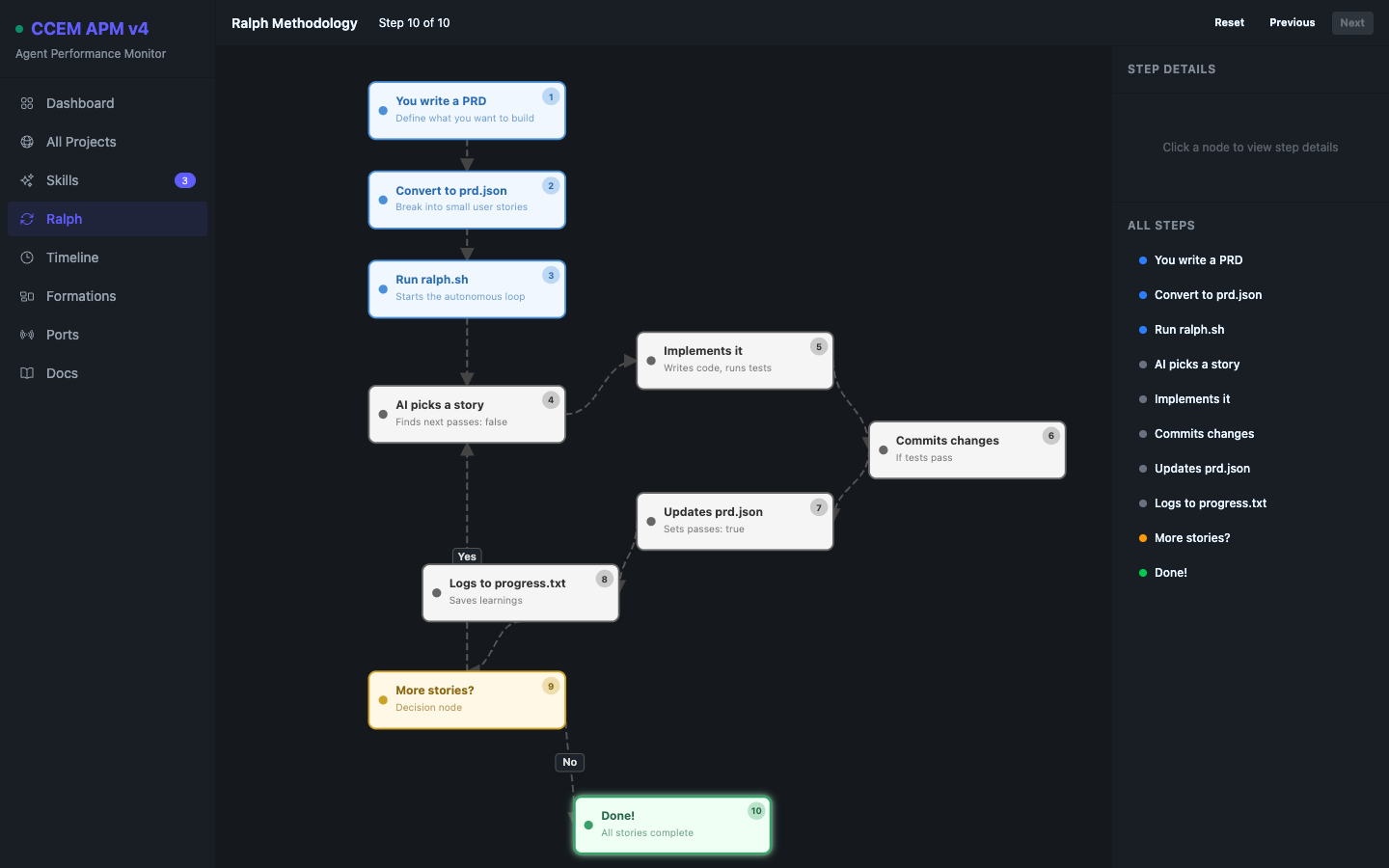

When Anthropic shipped the Claude Code hooks system[5] (SessionStart, PreToolUse, PostToolUse, SubagentStart, SubagentStop, SessionEnd), I had a way to push real-time data out of every running session. I built a Python monitor: a single ~500-line file using stdlib http.server with an embedded HTML/JavaScript dashboard, D3.js dependency graphs, and a Ralph methodology[6] flowchart.

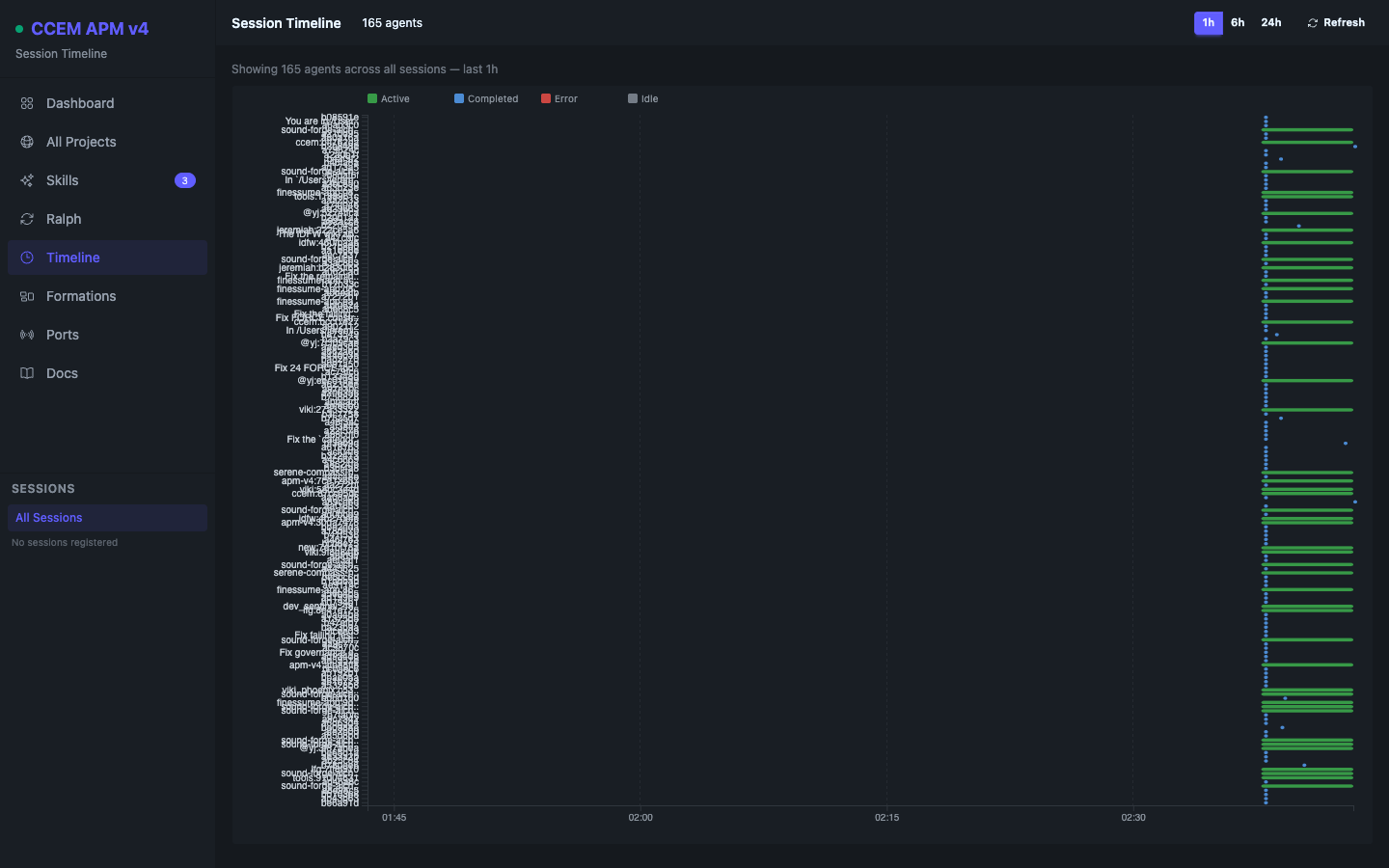

It worked. It also collapsed the moment three sessions fired heartbeats simultaneously. Python’s GIL[7] and http.server’s single-threaded request model meant concurrent heartbeats serialized, timestamps drifted, and dashboard state arrived in bursts. Across 10+ active Claude Code sessions, that was unacceptable. The Python monitor wasn’t a failure. It was an exact proof of concept that told me precisely what I needed next: a concurrency model designed for this problem.

Why I Chose Elixir, and Why I’d Choose It Again

I evaluated three paths: Node.js with worker threads, Go with goroutines, and Phoenix/Elixir. The decision was straightforward once I mapped requirements to each runtime’s concurrency model. The requirements were specific: monitor 10+ concurrent AI agent sessions in real time, push updates to a live dashboard via SSE[8], and do it without a separate frontend framework I’d need to maintain in parallel.

Phoenix/Elixir addressed every constraint at the language level:

- OTP supervision tree[9]: each Claude Code session is monitored by its own supervised GenServer. A crash in one session restarts only that process, automatically, without cascading. Fault isolation is the default, not an engineering concern.

- Phoenix PubSub: any GenServer can broadcast to any number of connected dashboards in microseconds, with no polling loop and no message broker infrastructure to operate.

- Phoenix LiveView[10]: the dashboard is server-rendered and reactive without React, Vue, or a separate JS build pipeline. I get the interactivity of a SPA with the operational simplicity of a server-side architecture.

- ETS[11]: monitoring data is ephemeral and read-heavy. ETS delivers microsecond lockless reads without disk I/O, from any number of concurrent LiveView processes simultaneously.

- Bandit HTTP server: handles thousands of concurrent connections natively, each in its own lightweight BEAM process, with no thread pool tuning required.

Node and Go would have required me to construct the concurrency scaffolding myself. Elixir already had it, stress-tested across decades of telecom infrastructure. I made a deliberate bet on the BEAM VM[12] for the domain it was built for: monitoring many concurrent processes with guaranteed fault isolation.

Figure caption (start missing): ... HookBridge restarts only that process, leaving AgentRegistry and all active sessions untouched. The full tree spans 30+ GenServers. EventRouter and StateManager are the AG-UI v2 additions shipped in v5.0.0.
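To make the fault-isolation claim concrete, here is a minimal sketch using only the Elixir standard library. The module name `SessionMonitor`, the registry name `SessionRegistry`, and the heartbeat counter are hypothetical stand-ins, not CCEM's actual modules. It starts one supervised GenServer per session, kills one, and checks that the other keeps its state while the killed one comes back fresh.

```elixir
defmodule SessionMonitor do
  # Hypothetical per-session worker; the article does not show CCEM's real modules.
  use GenServer

  def start_link(session_id) do
    GenServer.start_link(__MODULE__, session_id, name: via(session_id))
  end

  def heartbeat(session_id), do: GenServer.call(via(session_id), :heartbeat)

  # Look the process up by session id instead of holding on to a pid.
  defp via(session_id), do: {:via, Registry, {SessionRegistry, session_id}}

  @impl true
  def init(session_id), do: {:ok, %{id: session_id, beats: 0}}

  @impl true
  def handle_call(:heartbeat, _from, state) do
    state = %{state | beats: state.beats + 1}
    {:reply, state.beats, state}
  end
end

{:ok, _} = Registry.start_link(keys: :unique, name: SessionRegistry)
{:ok, sup} = DynamicSupervisor.start_link(strategy: :one_for_one)

{:ok, a} = DynamicSupervisor.start_child(sup, {SessionMonitor, "session-a"})
{:ok, b} = DynamicSupervisor.start_child(sup, {SessionMonitor, "session-b"})
1 = SessionMonitor.heartbeat("session-b")

# Crash session A only, then wait for the supervisor to start its replacement.
Process.exit(a, :kill)

await_restart = fn await ->
  case Registry.lookup(SessionRegistry, "session-a") do
    [{pid, _}] when pid != a ->
      pid

    _ ->
      Process.sleep(10)
      await.(await)
  end
end

new_a = await_restart.(await_restart)

IO.inspect(Process.alive?(b), label: "session-b still alive")
IO.inspect(new_a != a, label: "session-a restarted as a new process")
```

The `:one_for_one` strategy is what keeps the restart local: the supervisor replaces only the child that exited, so session B never notices.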

The APM Takes Shape: Dashboard, Native Agent, Ralph Flowchart

The Phoenix APM launched in v2.0.0 (November 2025) as a git submodule, on port 3031. I reimplemented every feature from the Python monitor — agent fleet view, D3.js dependency graph, Ralph methodology flowchart — but now backed by OTP processes instead of a polling loop. Every registered Claude Code session has a dedicated GenServer that owns its state; the D3 graph updates in real time via Phoenix PubSub the moment a hook fires.
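The push model described above can be sketched without Phoenix. This example deliberately swaps Phoenix.PubSub for the standard library's `Registry` in `:duplicate` mode so that it runs as a plain script; in a Phoenix app the equivalent calls would be `Phoenix.PubSub.subscribe/2` and `Phoenix.PubSub.broadcast/3`. The names `HookBus`, `Dashboard`, and `HookBroadcast`, and the event shape, are assumptions for illustration.

```elixir
{:ok, _} = Registry.start_link(keys: :duplicate, name: HookBus)

defmodule Dashboard do
  # Hypothetical subscriber process standing in for a connected LiveView.
  def start(topic, parent) do
    spawn_link(fn ->
      {:ok, _} = Registry.register(HookBus, topic, [])
      send(parent, {:subscribed, self()})
      loop(parent)
    end)
  end

  defp loop(parent) do
    receive do
      {:hook_event, event} ->
        # A real dashboard would re-render here; the sketch just reports back.
        send(parent, {:rendered, self(), event})
        loop(parent)
    end
  end
end

defmodule HookBroadcast do
  # Fan one event out to every process registered under the topic.
  def broadcast(topic, event) do
    Registry.dispatch(HookBus, topic, fn subscribers ->
      for {pid, _value} <- subscribers, do: send(pid, {:hook_event, event})
    end)
  end
end

parent = self()
d1 = Dashboard.start("sessions", parent)
d2 = Dashboard.start("sessions", parent)

# Wait until both dashboards have subscribed before broadcasting.
for pid <- [d1, d2] do
  receive do
    {:subscribed, ^pid} -> :ok
  end
end

HookBroadcast.broadcast("sessions", %{hook: "PostToolUse", session: "session-a"})
```

No subscriber polls for changes: each dashboard process simply blocks in `receive` until a hook event arrives.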

In December 2025 I added a second component: CCEMAgent, a native SwiftUI macOS menubar app. I wanted the monitoring layer to be ambient — always present without occupying a browser tab. CCEMAgent polls port 3032, surfaces project count, active sessions, and UPM wave progress in the system menu, and uses LaunchAtLogin (ServiceManagement framework) so it starts with macOS automatically. UNUserNotificationCenter delivers native notifications when session states change.

Figure caption (start missing): ... prd.json, surfaced without opening the file.

One Server, Thirty-Eight Projects, Zero Data Leakage

By February 2026, I had 38 active projects registered in apm_config.json. The single-project assumption baked into v2.0.0 had to go. The v2.2.x refactor introduced subdirectory-namespaced sessions in ETS, a project router, and a port manager for clash detection. Each project has its own namespace in the ETS session index — project A’s sessions are completely isolated from project B’s at the data layer, even though they share a single Phoenix process. session_init.sh upserts the active project into apm_config.json automatically on every new Claude Code session start.
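One way to picture the per-project namespacing is a composite ETS key. The sketch below is an assumption about the layout, not CCEM's actual schema: the module `SessionIndex`, the table name, and the `{project, session_id}` key are all hypothetical. It shows that two projects can reuse the same session id without seeing each other's rows.

```elixir
defmodule SessionIndex do
  # Hypothetical module; table name and key layout are assumptions.
  @table :session_index

  def init do
    :ets.new(@table, [:named_table, :set, :public, read_concurrency: true])
  end

  def put(project, session_id, attrs) do
    :ets.insert(@table, {{project, session_id}, attrs})
  end

  def get(project, session_id) do
    case :ets.lookup(@table, {project, session_id}) do
      [{_key, attrs}] -> {:ok, attrs}
      [] -> :error
    end
  end

  def list(project) do
    # Match only rows whose key carries this project's namespace.
    @table
    |> :ets.match_object({{project, :_}, :_})
    |> Enum.map(fn {{_project, session_id}, attrs} -> {session_id, attrs} end)
    |> Enum.sort()
  end
end

SessionIndex.init()
SessionIndex.put("finessume", "s1", %{status: :active})
SessionIndex.put("finessume", "s2", %{status: :idle})
SessionIndex.put("pegues.io", "s1", %{status: :active})

IO.inspect(SessionIndex.list("finessume"), label: "finessume sessions")
```

Because the project is part of the key, a lookup for one project cannot return another project's session even when the session ids collide.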

The test suite was the forcing function. After the multi-project refactor, v2.3.3 had 193 test failures. I worked through them systematically using a GenServerHelpers pattern that correctly handles OTP’s async message-passing lifecycle in test contexts. Zero failures on release.
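The article names the GenServerHelpers pattern but not its functions, so the following is only a sketch of one common idiom that fits the description. A `GenServer.cast/2` returns before the server has handled it, so a test that reads shared state right away can see stale data. A synchronous `:sys.get_state/1` call sent afterwards returns only once the casts queued ahead of it have been processed. `HeartbeatWriter` and `sync/1` are hypothetical names.

```elixir
defmodule HeartbeatWriter do
  # Hypothetical server that records heartbeats into an ETS table asynchronously.
  use GenServer

  def start_link(table), do: GenServer.start_link(__MODULE__, table)
  def record(pid, session_id), do: GenServer.cast(pid, {:record, session_id})

  @impl true
  def init(table), do: {:ok, table}

  @impl true
  def handle_cast({:record, session_id}, table) do
    # Increment the counter, creating the row with 0 if it does not exist yet.
    :ets.update_counter(table, session_id, 1, {session_id, 0})
    {:noreply, table}
  end
end

defmodule GenServerHelpers do
  # Hypothetical helper: block until the server has drained the messages
  # this process sent before the call.
  def sync(pid) do
    _ = :sys.get_state(pid)
    :ok
  end
end

table = :ets.new(:heartbeats, [:set, :public])
{:ok, pid} = HeartbeatWriter.start_link(table)

for _ <- 1..50, do: HeartbeatWriter.record(pid, "session-a")

# Without this line the lookup below could run before all casts are handled.
:ok = GenServerHelpers.sync(pid)

[{"session-a", count}] = :ets.lookup(table, "session-a")
IO.inspect(count, label: "heartbeats recorded")
```

The helper relies on message ordering between a single sender and receiver, so it covers casts issued by the test process itself; messages from other processes need their own synchronization point.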

v2.2.1 also shipped an OpenAPI 3.0.3 specification covering 50+ endpoints across REST, SSE, and LiveView routes. The v2 API surface added SLOs, alert rules, audit log, and a full v3-compatibility endpoint layer so existing hook integrations required no changes to continue working.

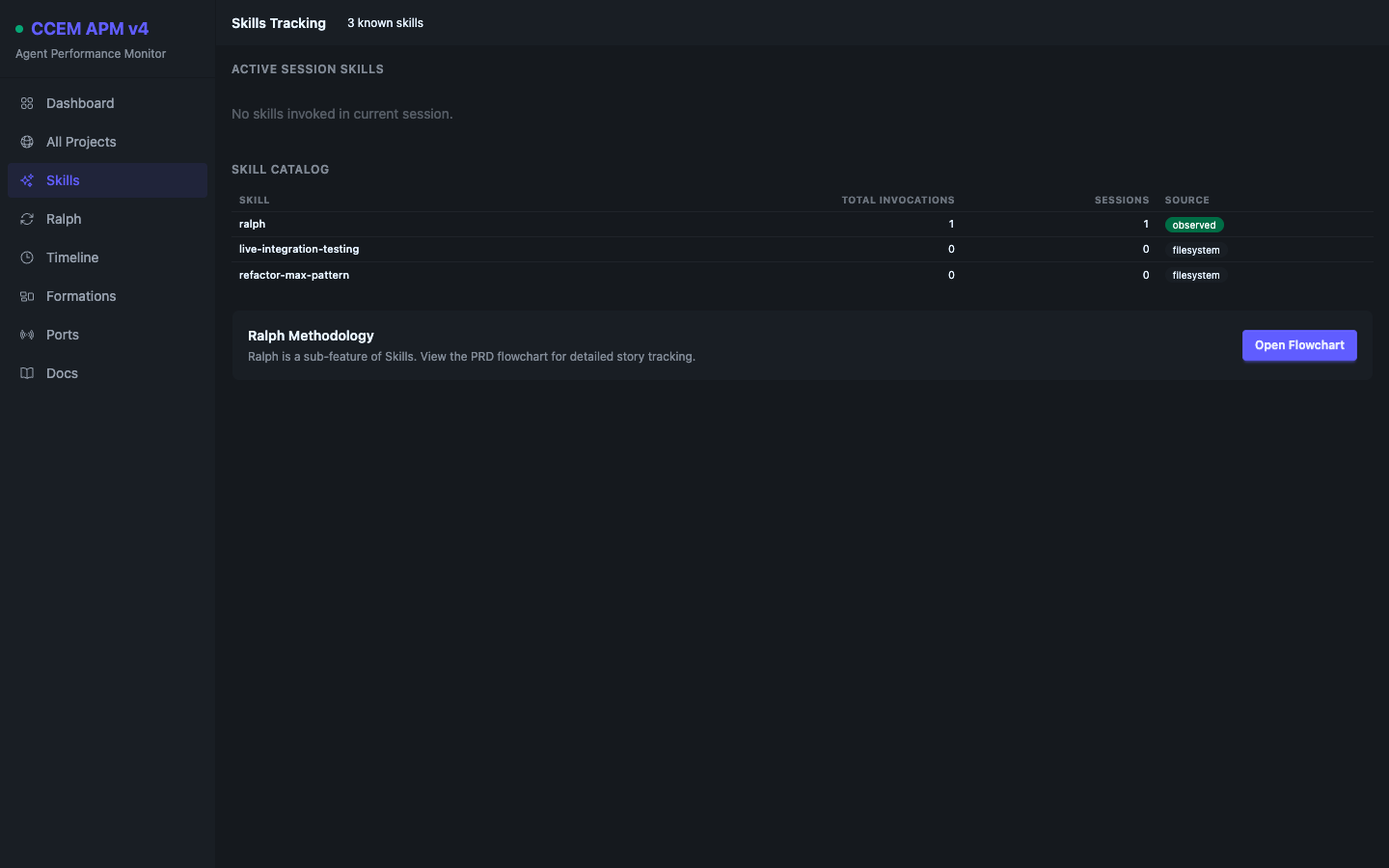

Figure caption (start missing): ... SkillsRegistry GenServer that tracks which skills are active per project, their hook configurations, and last-seen invocation times. Each new platform capability became a new GenServer, not a new service.

Meeting the Protocol Where It Lives: AG-UI Integration

In early March 2026, the AG-UI (Agent-User Interaction) protocol[13] emerged as an open standard for streaming structured agent events to frontend UIs. Where CCEM had been using proprietary endpoints (/api/register, /api/heartbeat, /api/notify), AG-UI defined a typed, portable event vocabulary across seven event categories.

I integrated AG-UI in two layers. In v5.0.0, three new GenServers backed an SSE event stream at /api/ag-ui/events: EventRouter (routes and fans out incoming events), HookBridge (translates every legacy hook call into the equivalent AG-UI event automatically, so existing integrations got AG-UI emission for free, with zero changes to their hook scripts), and StateManager (maintains canonical agent state using RFC 6902 JSON Patch[14] for efficient state diffs).
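To show what state kept as JSON Patch diffs looks like in practice, here is a small sketch that applies a subset of RFC 6902 to nested maps. It is not StateManager's implementation: `PatchSketch` is a hypothetical module, it handles only `add`, `replace`, and `remove` on object members, and it omits arrays, `move`, `copy`, `test`, and whole-document paths. The state shape is also an assumption.

```elixir
defmodule PatchSketch do
  # Applies add / replace / remove operations to maps with string keys.
  def apply_patch(doc, ops), do: Enum.reduce(ops, doc, &apply_op/2)

  defp apply_op(%{"op" => "add", "path" => path, "value" => value}, doc) do
    # put_in/3 raises if the parent is missing, which matches RFC 6902's rule for add.
    put_in(doc, tokens(path), value)
  end

  defp apply_op(%{"op" => "replace", "path" => path, "value" => value}, doc) do
    keys = tokens(path)
    ensure_exists!(doc, keys, path)
    put_in(doc, keys, value)
  end

  defp apply_op(%{"op" => "remove", "path" => path}, doc) do
    keys = tokens(path)
    ensure_exists!(doc, keys, path)
    {_removed, doc} = pop_in(doc, keys)
    doc
  end

  # Split a JSON Pointer (RFC 6901) into keys, unescaping ~1 and ~0 in that order.
  defp tokens("/" <> rest) do
    rest
    |> String.split("/")
    |> Enum.map(fn t -> t |> String.replace("~1", "/") |> String.replace("~0", "~") end)
  end

  defp ensure_exists!(doc, keys, path) do
    case fetch_path(doc, keys) do
      {:ok, _} -> :ok
      :error -> raise ArgumentError, "no value at #{path}"
    end
  end

  defp fetch_path(doc, []), do: {:ok, doc}

  defp fetch_path(%{} = doc, [key | rest]) do
    case Map.fetch(doc, key) do
      {:ok, child} -> fetch_path(child, rest)
      :error -> :error
    end
  end

  defp fetch_path(_other, _keys), do: :error
end

state = %{"agents" => %{"a1" => %{"status" => "idle"}}}

patch = [
  %{"op" => "replace", "path" => "/agents/a1/status", "value" => "running"},
  %{"op" => "add", "path" => "/agents/a2", "value" => %{"status" => "idle"}},
  %{"op" => "add", "path" => "/agents/a1/tool", "value" => "Bash"},
  %{"op" => "remove", "path" => "/agents/a1/tool"}
]

new_state = PatchSketch.apply_patch(state, patch)
IO.inspect(new_state, label: "state after patch")
```

Each operation touches one path, so a subscriber that already holds the previous state only needs the patch list, not a fresh copy of every agent.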

In parallel, I extracted a standalone Elixir library: ag_ui. Fifteen core type structs, 30 event structs, SSE and Phoenix Channel transports, a middleware pipeline, and an HTTP agent client — all independently testable and ready for publication to hex.pm. The library shipped with 75 tests and zero failures.

Figure caption (start missing): ... HookBridge, which translates to typed AG-UI events. Native AG-UI clients bypass the bridge entirely. EventRouter fans out to subscribers; StateManager maintains canonical agent state as RFC 6902 JSON Patch diffs. The gold dashed path is the AG-UI SSE stream to connected dashboards.

A Three-Component Platform for Agentic Development at Scale

CCEM v5.1.0 is three tightly integrated components built and maintained entirely by me over five months:

- @ccem/core CLI/TUI — TypeScript, Node.js, Ink. 7 commands, 867 tests at 96.89% coverage. Manages config files, merges, backups, security audits, and fork detection.

- APM Server (apm_v5) — Phoenix/Elixir, Bandit, LiveView. 30+ OTP GenServers, 22 LiveView pages, 50+ REST/SSE routes, OpenAPI 3.0.3 spec, ETS session index, PubSub broadcasts. Monitors N concurrent Claude Code sessions across 38 registered projects.

- CCEMAgent — SwiftUI/macOS. Native menubar app, WebKit APM window, UNUserNotificationCenter, LaunchAtLogin, AG-UI chat view and agent management controls (v5.1.0).

Plus a standalone Elixir library (ag_ui) with 75 tests — 15 type structs, 30 event structs, SSE + Phoenix Channel transports — independently publishable to hex.pm.

Figure caption (start missing): ... ag_ui Elixir SDK sits above as an independently publishable library.

What I Shipped and What It Reflects

More than the code, CCEM reflects how I approach engineering: when a new standard or protocol emerges, I don’t wait to see if it gains adoption — I ship an implementation. MCP arrived and I built hook integrations. AG-UI arrived and I extracted a library. Claude Skills emerged and I built a SkillsRegistry GenServer. Each pivot was deliberate, fast, and grounded in the architectural work already done. The bet on Elixir’s actor model paid for itself every time a GenServer crashed and restarted silently while 10 other sessions kept running.

ag_ui_ex — Elixir SDK for the AG-UI Protocol

When AG-UI v1 landed in early 2026, there was no Elixir SDK. The protocol is a natural fit for the BEAM — typed event structs map cleanly to Elixir structs, SSE streaming is trivially composable with Plug, and state management over JSON Patch is a GenServer problem. I shipped ag_ui_ex v0.1.0 in one sprint.

What it ships

- 33 typed event structs with validation

- SSE transport (Plug-based) for streaming to clients

- Phoenix Channel macros for WebSocket delivery

- Composable middleware pipeline

- GenServer state manager — RFC 6902 JSON Patch

- HTTP client for consuming AG-UI event streams

- SSE / JSON encoder with camelCase ↔ snake_case
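The last item in the list, key-case conversion plus SSE framing, can be illustrated with a few standard-library functions. This is a sketch of the general technique only. `WireFormat` and its functions are hypothetical and are not ag_ui_ex's encoder API, and the JSON string is written by hand to avoid assuming a JSON library.

```elixir
defmodule WireFormat do
  # Hypothetical helpers, not the library's actual encoder.

  # snake_case atom or string to lower camelCase string.
  def to_camel(key) when is_atom(key), do: key |> Atom.to_string() |> to_camel()

  def to_camel(key) when is_binary(key) do
    [head | tail] = String.split(key, "_")
    head <> Enum.map_join(tail, &String.capitalize/1)
  end

  # camelCase string back to snake_case string.
  def to_snake(key) when is_binary(key) do
    Regex.replace(~r/([A-Z])/, key, fn _match, letter -> "_" <> String.downcase(letter) end)
  end

  def camelize_keys(map) when is_map(map) do
    Map.new(map, fn {key, value} -> {to_camel(key), value} end)
  end

  # Frame a payload as one Server-Sent Event: each payload line gets a
  # "data: " prefix and a blank line terminates the event.
  def sse_frame(payload) when is_binary(payload) do
    body =
      payload
      |> String.split("\n")
      |> Enum.map_join("\n", &("data: " <> &1))

    body <> "\n\n"
  end
end

IO.inspect(WireFormat.camelize_keys(%{run_id: "run-001", thread_id: "thread-001"}))
IO.write(WireFormat.sse_frame(~s({"type":"RUN_STARTED","runId":"run-001"})))
```

Keeping the conversion at the encoding boundary lets the Elixir side stay in idiomatic snake_case while clients receive the camelCase field names they expect.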

Resources

- Hex.pm → ag_ui_ex

- GitHub → peguesj/ag_ui_ex

- Upstream PR #1293 — pending review for inclusion in the AG-UI community SDK registry

- 75 tests, 0 failures

Install

```elixir
# mix.exs
def deps do
  [
    {:ag_ui_ex, "~> 0.1.0"}
  ]
end
```

```shell
mix deps.get
```

Quick Start

```elixir
alias AgUi.Core.Events

# Build a typed lifecycle event
event = %Events.RunStarted{
  run_id: "run-001",
  thread_id: "thread-001",
  timestamp: DateTime.utc_now() |> DateTime.to_unix(:millisecond)
}

# Encode the event for the SSE transport
{:ok, sse_data} = AgUi.Encoder.EventEncoder.encode(event)

# Stream events in a Phoenix controller
def stream(conn, _params) do
  AgUi.Transport.SSE.stream_events(conn, fn send ->
    send.(%Events.RunStarted{run_id: "run-001"})
    send.(%Events.RunFinished{run_id: "run-001"})
  end)
end
```

The library distils the same pattern applied to CCEM APM v5: let the BEAM’s actor model carry the concurrency burden, keep the event schema explicit and typed, and make the transport layer a thin wrapper over Plug. When upstream PR #1293 is accepted, ag_ui_ex joins Go, Rust, Dart, Kotlin, Java, and Ruby as a first-class entry in the AG-UI protocol community SDK registry.

References & Notes

1. Claude Code CLI. Anthropic’s agentic CLI tool that operates in the terminal with full filesystem, git, and shell access. Released as a research preview in February 2025 and made generally available in May 2025. CCEM was built to manage the operational complexity introduced by running multiple Claude Code sessions concurrently across different projects.

2. Zod. A TypeScript-first schema declaration and validation library. Its static type inference produces the TypeScript type and the runtime validator from a single schema definition, eliminating the divergence that occurs when maintaining separate type annotations and validation logic.

3. Ink. A React-based library (vadimdemedes/ink) for building terminal UIs using JSX components and Flexbox layout. Ink renders the same React component model to ANSI terminal output, enabling hooks-based TUI development with the same mental model as web React.

4. MCP (Model Context Protocol). An open standard published by Anthropic in November 2024 for connecting AI models to external data sources and tools via a standardized server/client protocol. MCP servers expose capabilities (resources, tools, prompts) that Claude Code sessions can invoke. The Chrome DevTools MCP server enables browser automation and inspection.

5. Claude Code hooks. Shell scripts that fire at six lifecycle events: SessionStart, PreToolUse, PostToolUse, SubagentStart, SubagentStop, SessionEnd. Each hook receives event metadata as JSON via stdin and can call any external process, which is the integration surface CCEM’s monitoring layer depends on.

6. Ralph methodology. An autonomous fix loop I developed for Claude Code: a Ralph session receives a prd.json story definition with acceptance criteria, implements it, runs quality gates (lint, typecheck, tests), marks the story passes: true, and moves to the next story automatically. The APM’s RalphFlowchart LiveView displays the current story and its status in real time.

7. Python GIL. The Global Interpreter Lock in CPython prevents parallel execution of Python bytecode across threads. Combined with http.server’s single-threaded request handling, concurrent SSE connections queue behind each other, causing timestamp drift and bursty dashboard updates. For an I/O-concurrent workload at this scale, that is a structural limit of the design rather than a tuning problem.

8. SSE (Server-Sent Events). A W3C standard (EventSource API) for unidirectional HTTP event streaming from server to client. SSE over HTTP/2 multiplexes multiple streams on a single TCP connection. CCEM uses SSE rather than WebSockets because the monitoring data flow is inherently unidirectional and SSE’s reconnection semantics are simpler to manage.

9. OTP supervision tree. In Erlang/Elixir, a Supervisor monitors worker processes and restarts them on failure according to a configurable strategy. The supervision tree structure ensures that crashes are isolated, contained, and recovered from automatically: fault tolerance as a language primitive rather than an application concern.

10. Phoenix LiveView. A Phoenix framework extension for server-rendered reactive UIs via WebSocket. UI state lives as a GenServer process on the server; diffs are computed server-side and pushed as minimal patches. LiveView delivers near-SPA interactivity without a JavaScript framework or separate build pipeline.

11. ETS (Erlang Term Storage). An in-memory key-value store built into the BEAM VM. ETS supports concurrent reads from multiple processes without locking, at microsecond latencies. Monitoring data is ephemeral and read-heavy, and ETS is the correct data layer for this access pattern.

12. BEAM VM. Bogdan/Björn’s Erlang Abstract Machine, the virtual machine that runs Erlang and Elixir. Erlang was created at Ericsson in the 1980s for telecom switches. The runtime is engineered for fault tolerance, soft-realtime response, and millions of lightweight isolated processes. Its actor model (preemptive scheduling, isolated process heaps, message-passing IPC) maps directly onto the problem of monitoring many concurrent AI agent sessions.

13. AG-UI protocol. An open standard (ag-ui-protocol/ag-ui on GitHub) for streaming structured agent events from AI backends to frontend UIs. Defines a typed event vocabulary across seven categories (lifecycle, tool, message, state, step, raw, custom), transport layers (SSE and WebSocket), and a state management model based on RFC 6902 JSON Patches. Integrated by CCEM APM in v5.0.0 (March 2026).

14. RFC 6902 JSON Patch. An IETF standard defining a JSON format for expressing mutations to a JSON document as a sequence of operations: add, remove, replace, move, copy, test. Using JSON Patch for agent state updates produces minimal diff payloads rather than full-document replacements, which is essential for high-frequency streaming contexts where payload size and parse overhead matter.